I'm trying to write a Java application that accesses the USB ports to read from and write to a device connected through USB. The problem I face is that I don't know what exactly to use in Java to do such a thing. I searched online and found something called JUSB, but all the posts seem fairly old. Currently I'm using the RXTX libraries, but I sometimes run into sync errors.

When I use C# to do the equivalent, it requires far less code and I don't get any of the same sync errors. My question is: is there anything built into the latest version of the JRE that I can use to access the USB ports (and that is just as easy as the equivalent C# code)?

There is nothing equivalent to C#'s USB support in Java.

Both jUSB and Java-USB are severely out-of-date and likely unusable for any serious application development. If you want to implement a cross-platform USB application, really your best bet is to write an abstract interface that talks to Linux, Mac and Windows native libraries that you'll have to write yourself. I'd look at to handle Mac and Linux. Windows, as you've seen, is pretty straightforward.

I just came off a year-long project that did just this, and unfortunately this is the only serious cross-platform solution. If you don't have to implement on Windows and your needs are limited, you may get by with one of the older Java libs (jUSB or Java-USB). Anything that needs to deploy on Win32/Win64 will need a native component.

I hesitated to post this issue, because I can't provide much help in figuring out what the underlying cause is. However, I can reproduce it consistently. I've also posted this as a question to StackOverflow: I've copied the important parts from that post here.

I recently switched from RXTX to PureJavaComm. However, I have started noticing seemingly random corrupt byte reads with PureJavaComm on Linux.

The corruption does not occur when using RXTX. I can switch back and forth very easily and quickly on the same computer with the same hardware. Every time, I quickly see random corrupt byte reads with PureJavaComm (although not immediately or continuously), and no issues using RXTX. I am using version 0.0.13 of PureJavaComm, and I've been running with multiple variants of RXTX.

The corruption does not seem to occur on Windows 7 (32- or 64-bit). The test Linux box is a small Zotac server running 32-bit Ubuntu 12.04, connected to custom hardware via a USB-serial connector. I open the serial port like this.


String line = buffReader.readLine(); // blocks until a newline character is received

The read happens in its own thread so that I can handle timeouts manually (because setReceiveTimeout never seemed to work consistently across platforms in RXTX). There is also an output stream, but after a few commands sent at initialization, it's not used. The corruption is evident in the line variable. Sometimes it is a sequence of corrupt (non-ASCII) characters; sometimes it is just one character in the middle of the line.
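A minimal sketch of that pattern, assuming only a plain `InputStream` (the serial port's stream in practice; a `ByteArrayInputStream` stands in for it here): a daemon thread blocks in `readLine()` and hands complete lines over a queue, and the caller applies its own timeout with `poll`. `TimedLineReader` is a hypothetical name, not part of RXTX or PureJavaComm.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

// Hypothetical helper: a background thread does the blocking reads and the caller
// enforces its own timeout instead of relying on the port's receive timeout.
final class TimedLineReader {
    private final BlockingQueue<String> lines = new LinkedBlockingQueue<>();

    TimedLineReader(InputStream in) {
        BufferedReader reader =
                new BufferedReader(new InputStreamReader(in, StandardCharsets.US_ASCII));
        Thread worker = new Thread(() -> {
            try {
                String line;
                // readLine() blocks until CR, LF or CRLF arrives; it returns null at end of stream.
                while ((line = reader.readLine()) != null) {
                    lines.add(line);
                }
            } catch (IOException e) {
                // A real application would report this; the sketch just stops reading.
            }
        }, "serial-line-reader");
        worker.setDaemon(true); // do not keep the JVM alive just for this thread
        worker.start();
    }

    /** Returns the next line, or null if none arrived within the timeout. */
    String nextLine(long timeout, TimeUnit unit) throws InterruptedException {
        return lines.poll(timeout, unit);
    }

    public static void main(String[] args) throws InterruptedException {
        byte[] data = "LINE 1\r\nLINE 2\r\n".getBytes(StandardCharsets.US_ASCII);
        TimedLineReader r = new TimedLineReader(new ByteArrayInputStream(data));
        System.out.println(r.nextLine(1, TimeUnit.SECONDS)); // LINE 1
        System.out.println(r.nextLine(1, TimeUnit.SECONDS)); // LINE 2
        System.out.println(r.nextLine(100, TimeUnit.MILLISECONDS)); // null: timed out
    }
}
```

A timeout here only tells the caller that nothing arrived; the worker thread stays blocked in `readLine()` until data or end of stream.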

I haven't yet determined whether it is always the same character (such as a NUL). So far I only know it's corrupt because viewing it in vi shows a '^@' symbol where the character(s) would normally be. I would suspect a serial port timing problem.
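In vi, `^@` is the caret notation for NUL (0x00). To check whether every corrupt character is the same byte, a small diagnostic could log the position and code of each character outside printable ASCII in each line read. A minimal sketch; `LineInspector` is a hypothetical name, and CR/LF are assumed to have already been stripped by `readLine()`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical diagnostic: reports every character outside printable ASCII (0x20..0x7E)
// as "index:0xCODE", so corruption can be compared across many lines.
final class LineInspector {
    static List<String> nonPrintable(String line) {
        List<String> found = new ArrayList<>();
        for (int i = 0; i < line.length(); i++) {
            char c = line.charAt(i);
            if (c < 0x20 || c > 0x7E) {
                found.add(String.format("%d:0x%02X", i, (int) c));
            }
        }
        return found;
    }

    public static void main(String[] args) {
        System.out.println(nonPrintable("TEMP=21\0.5")); // [7:0x00]
        System.out.println(nonPrintable("TEMP=21.5"));   // []
    }
}
```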

However, that doesn't make sense in this case, because RXTX seems to have no problem with it. If you have any suggestions on how I might debug this, they would be appreciated.

Your thoughts on buffer overflow look potentially relevant. This particular hardware is sending lines of verbose data, but only at periodic intervals.

I'll try ideas from your notes above and report back next week. (Thanks for your pointer on BufferedReader's inefficiency for typical serial port reading. However, at first glance I don't see how a more efficient approach can be devised when the protocol is ASCII character-oriented and sends lines of arbitrary length in groups at periodic intervals. It does not send a line length value, so I never know how many bytes can be read without potentially blocking until the next interval.

I would never write a serial protocol like that myself, but in this case I don't have control over it.)

Some further thoughts: reading the RXTX source code for Windows (termios.c), function readserial, it looks to me (meaning I've not actually executed the code, so this may be a wrong conclusion) that it ignores the VMIN parameter and always reads as much as requested or times out. I did not look at how this is handled at higher levels in RXTX, but this seems like a violation of how termios is supposed to work. Be that as it may, this might explain why RXTX behaves 'better' here. BufferedReader attempts to read 8192 bytes, and so in RXTX the read will return when that many bytes have been read or the timeout kicks in.

This behavior, though it looks to me like a violation of the JavaComm spec, is much more efficient; of course, it also means that it will block forever if the data flow stops and there is no timeout. If the above is correct, it looks to me that your code (as one might expect) does not care whether you get the data 'as it comes in' or only after the BufferedReader internal buffer is full, because it looks like you actually get the lines only when the buffer is full (in RXTX on Windows). That being the case, you might be able to get better performance from PJC if you enable the threshold with, for example, a value of 100 bytes. You might need to enable the timeout too, but if your data flow is continuous that should not make a difference.

I've updated to 0.14, but have the same issues. I think you might misunderstand the nature of the protocol I'm working with, and thus why BufferedReader still makes sense for me to use, and why setting the threshold should not have any effect. Trying to state it more clearly: I must read lines of ASCII strings from the serial port.

The lines are ASCII encoded and are terminated by CRLF characters. I do not have any indication of the length of the incoming strings, so I must read one byte at a time from the serial port, blocking until I finally find the LF character, at which point I know I have an entire string and can process it. With this in mind, I think using BufferedReader makes sense and setting the threshold beyond one byte should have no effect. I did try setting the threshold to '1', though, instead of just disabling it, just in case.
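For comparison, the same CRLF framing can be read straight from the port's `InputStream` with no Reader in between, one blocking `read()` per byte. A minimal sketch, assuming US-ASCII data and CRLF terminators as described; `CrlfLineReader` is a hypothetical name and a `ByteArrayInputStream` stands in for the port.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;

// Sketch: read one CRLF-terminated ASCII line directly from an InputStream, one byte at a time.
final class CrlfLineReader {
    /** Returns the line without CR/LF, or null if the stream ended before any byte arrived. */
    static String readLine(InputStream in) throws IOException {
        ByteArrayOutputStream buf = new ByteArrayOutputStream();
        int b;
        while ((b = in.read()) != -1 && b != '\n') { // read() blocks until the next byte
            buf.write(b);
        }
        if (b == -1 && buf.size() == 0) {
            return null;
        }
        byte[] bytes = buf.toByteArray();
        int len = bytes.length;
        if (len > 0 && bytes[len - 1] == '\r') {
            len--; // drop the CR of the CRLF terminator
        }
        return new String(bytes, 0, len, StandardCharsets.US_ASCII);
    }

    public static void main(String[] args) throws IOException {
        InputStream in = new ByteArrayInputStream("A1\r\nB2\r\n".getBytes(StandardCharsets.US_ASCII));
        String line;
        while ((line = readLine(in)) != null) {
            System.out.println(line); // A1, then B2
        }
    }
}
```

If the stream ends in the middle of a line, the partial line is returned as-is; a real reader might prefer to discard or flag it.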

If it helps, this is a sample of the lines of character data I'm receiving when the 'corruption' occurs. In every case, it's as if a new byte gets inserted into the stream. Once one corrupt byte gets inserted, subsequent lines are much more likely to also have a corrupt byte. //A few hundred lines might have been read without corrupt characters before this.

On 18.02, kaliatech wrote: I've updated to 0.14, but have the same issues. I think you might misunderstand the nature of the protocol I'm working with, and thus why BufferedReader still makes sense for me to use, and why setting the threshold should not have any effect.

I don't think I misunderstood you. What I was saying is: I think RXTX works for you because RXTX does NOT work the way it is supposed to. BufferedReader will try to read 8192 bytes from the input stream. RXTX will read that much, although according to the JavaComm spec it should return as soon as any data (one byte) is available. This lets BufferedReader work more efficiently, as it is not reading stuff char by char but in large chunks. And because it works for you with RXTX, I don't think your application cares that in practice it is not receiving the lines as soon as they come but only after the buffer is full.

You would not notice it if the data keeps coming and you just keep reading. Like I said, I've not verified the above by running code, just looked into the RXTX code and the BufferedReader code, so I may be totally wrong. However, if the above is true, then you can set the threshold to the maximum (255), and that should increase the performance a hundredfold or something. So please try that.

Just to further explain and verify that this is not related to BufferedReader, I also modified my read code to look like this.

I set the threshold to 255. It made no difference except to sometimes block temporarily, likely due to the threshold not being met until the next batch of bytes was sent from the device. This is as I would expect.

I also refactored the code to use the raw InputStream returned from SerialPort rather than a Reader. It also did not seem to make a difference.

I did not expect that it would. My understanding of BufferedReader and InputStreamReader seems to differ from yours.
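Whether `read` returns a single byte (as the JavaComm spec allows once any data is available) or fills the whole 8192-byte request (as RXTX is described as doing above) should change only how often BufferedReader goes back to the stream, not which lines it produces. A small test double makes that checkable without hardware. This is a sketch: `ChunkedInputStream` is a hypothetical name, and capping each read only approximates a real port's timing.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.FilterInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Hypothetical test double: each read(byte[], int, int) returns at most maxChunk bytes,
// imitating a port that returns as soon as some data is available.
final class ChunkedInputStream extends FilterInputStream {
    private final int maxChunk;

    ChunkedInputStream(InputStream in, int maxChunk) {
        super(in);
        this.maxChunk = maxChunk;
    }

    @Override
    public int read(byte[] b, int off, int len) throws IOException {
        return super.read(b, off, Math.min(len, maxChunk));
    }

    /** Reads every line through BufferedReader, the same way the application does. */
    static List<String> readAllLines(InputStream in) throws IOException {
        BufferedReader reader =
                new BufferedReader(new InputStreamReader(in, StandardCharsets.US_ASCII));
        List<String> lines = new ArrayList<>();
        String line;
        while ((line = reader.readLine()) != null) {
            lines.add(line);
        }
        return lines;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = "X=1\r\nY=22\r\nZ=333\r\n".getBytes(StandardCharsets.US_ASCII);
        List<String> oneByte = readAllLines(new ChunkedInputStream(new ByteArrayInputStream(data), 1));
        List<String> fullBuffer = readAllLines(new ChunkedInputStream(new ByteArrayInputStream(data), 8192));
        System.out.println(oneByte.equals(fullBuffer)); // true
    }
}
```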

Regardless, I don't think it's important for this issue. The issue still occurs when using the raw input stream. I agree that my last code snippet is a very inefficient way to read bytes from the serial port.

However, at this point the issue is not one of efficiency, but of reliability and correctness. All variants of this code continue to work fine and reliably using PJC on Win7, or rxtx on both platforms. It generates these corrupt bytes and other weirdness only with PureJavaComm on Linux. (I say other weirdness because I have also noticed that the serial InputStream periodically seems to 'block', which I think, in the way my program loops, means that inputStream.available() is constantly returning zero until all of a sudden it releases a backlog of bytes. But I'm not positive on that yet. Again, it happens only with Linux/PJC.) I will soon be writing a simulator that can simulate the hardware output over virtual COM ports.

Once I have that, it will be interesting to see if I can still replicate the issue. I just noticed an interesting pattern in the log output whenever the issue occurs that might be helpful in determining the root cause. The pattern does repeat itself, kind of.

Hi, it might be educational to just do a simple loop that receives data using nothing but InputStream.read(byte) and collects the results into a large buffer for some minutes, and then dump that as hex values. Meanwhile, last night I created a simple self-contained test case that uses two threads to send and receive messages (200 bytes each) as fast as possible, i.e. one thread just sends stuff with no delay and no handshake, and the other thread reads them with the threshold set to the message length. You can access the code at: On my 2.6 GHz MacBook Pro running both Mountain Lion and Ubuntu under a Parallels virtual machine, using an FTDI USB/serial dongle at 230,000 baud, I get an average baud rate of 229737 (varies, of course) for 500 messages, with no transmission errors. You might try that code to see if you can reproduce your problem with it.
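The two-thread test described above can be sketched with standard library classes only. This is not the original test code (which is not reproduced here): a `PipedInputStream`/`PipedOutputStream` pair stands in for the serial loopback so the sketch runs anywhere, and `DataInputStream.readFully` plays the role of a receive threshold equal to the message length. Over a pipe the printed rate says nothing about a real port; only the error count is meaningful. Names are hypothetical.

```java
import java.io.DataInputStream;
import java.io.IOException;
import java.io.PipedInputStream;
import java.io.PipedOutputStream;
import java.io.UncheckedIOException;
import java.util.Arrays;

// Sketch of a two-thread send/receive check. A pipe stands in for the serial loopback here;
// on real hardware the two streams would come from the port(s) under test.
final class LoopbackTest {
    static final int MSG_LEN = 200;

    /** Printable, sequence-dependent message so that corruption or a slipped byte is detectable. */
    static byte[] message(int seq) {
        byte[] m = new byte[MSG_LEN];
        for (int i = 0; i < MSG_LEN; i++) {
            m[i] = (byte) ('A' + (seq + i) % 26);
        }
        return m;
    }

    /** Sends count messages as fast as possible and returns how many arrived corrupted. */
    static int run(int count) throws IOException, InterruptedException {
        PipedInputStream in = new PipedInputStream(4096);
        PipedOutputStream out = new PipedOutputStream(in);
        Thread sender = new Thread(() -> {
            try (PipedOutputStream o = out) {
                for (int seq = 0; seq < count; seq++) {
                    o.write(message(seq)); // no delay, no handshake
                }
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        });
        long start = System.nanoTime();
        sender.start();
        DataInputStream din = new DataInputStream(in);
        byte[] buf = new byte[MSG_LEN];
        int errors = 0;
        for (int seq = 0; seq < count; seq++) {
            din.readFully(buf); // wait for a whole message, similar in effect to threshold = MSG_LEN
            if (!Arrays.equals(buf, message(seq))) {
                errors++;
            }
        }
        sender.join();
        double seconds = (System.nanoTime() - start) / 1e9;
        // 10 bits per byte on the wire with 8N1 framing (start bit + 8 data bits + stop bit).
        System.out.printf("%d messages, %d errors, ~%.0f bit/s equivalent%n",
                count, errors, count * (double) MSG_LEN * 10 / seconds);
        return errors;
    }

    public static void main(String[] args) throws Exception {
        run(500);
    }
}
```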

If you can, you could then use it as a base for seeing what gets lost and where, by capturing the data to a buffer, as I suggest above.

I had my program running for the past few days without interruption. What I noticed is that the errors only start to occur with certain types of messages that the device sends. (Overnight the device does not send these specific types of messages, and during that time no corrupt bytes were received.) So the issue would seem to be device-specific. The thing I can't explain is why it occurs with PJC but not rxtx. Regardless, at this point it is not worth troubleshooting further until/unless I can replicate the issue outside of this specific device using a simpler test case.
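The capture-to-buffer idea suggested in this thread (receive with nothing but `InputStream.read`, then dump as hex) might look roughly like this. The thread suggests capturing for some minutes; in this sketch a byte limit is used instead so that it terminates on a finite test stream, and against a real port the limit would be chosen large enough for several minutes of traffic. `RawCapture` is a hypothetical name.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;

// Sketch: capture raw bytes using nothing but InputStream.read(byte[], int, int), then dump them as hex.
final class RawCapture {
    /** Reads until maxBytes have been captured or the stream ends. */
    static byte[] capture(InputStream in, int maxBytes) throws IOException {
        ByteArrayOutputStream captured = new ByteArrayOutputStream();
        byte[] buf = new byte[1024];
        while (captured.size() < maxBytes) {
            int n = in.read(buf, 0, Math.min(buf.length, maxBytes - captured.size()));
            if (n < 0) {
                break; // end of stream
            }
            captured.write(buf, 0, n); // keep only the n bytes the call reported
        }
        return captured.toByteArray();
    }

    /** Formats bytes as hex, 16 per line, each line prefixed with its offset. */
    static String hexDump(byte[] data) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < data.length; i++) {
            if (i % 16 == 0) {
                if (i > 0) {
                    sb.append('\n');
                }
                sb.append(String.format("%08X:", i));
            }
            sb.append(String.format(" %02X", data[i] & 0xFF));
        }
        return sb.toString();
    }

    public static void main(String[] args) throws IOException {
        byte[] sample = {'O', 'K', 0x00, '\r', '\n'};
        System.out.println(hexDump(capture(new ByteArrayInputStream(sample), 1 << 20)));
        // 00000000: 4F 4B 00 0D 0A
    }
}
```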

I will try your loop program above; however, I expect that if there actually is an issue specific to PJC, it will somehow depend on the types of messages and the associated timing being sent by this device. Also, there is some risk in using a VM, as you are doing, to verify whether the issue is repeatable. I've noticed that certain types of serial port problems do not replicate when using a VM, likely due to how the serial port is proxied between the guest and host. I can/will try in a VM, though, to see if the issue still occurs.

If that's okay with you, I will close this issue for now. Then, if/when I am able to replicate it with a simpler test case, I will create a new issue. (It might be a while before I have time to try doing so.)

I'm okay with closing this; you're welcome to re-open it anytime. Yes, I know a virtual machine is not ideal for testing, though it often makes issues worse, so it is kind of a worst-case test.

But that is what I have at the moment. Regarding your problem, one thing that comes to mind is that PJC may initialize something differently, and in your case this may make a difference. There was one issue where PJC did not initialize some field or another, and this caused CR and LF to be 'eaten' from the data stream. Is all your data printable ASCII, or are there control characters (less than 0x20)?

All data sent from the device should be printable ASCII, excluding the CR/LF characters at the end of each line. That seems to be the case when using rxtx.

Also, I just used to log the raw data being sent by the device without rxtx/PJC/Java in the loop. After startup (in which I can see the device does send a sequence of NUL bytes), no NUL bytes were seen after monitoring it for a good fifteen minutes. I then switched back to PJC, and NUL bytes were again reported as described above. I think that confirms that PJC is somehow inserting NUL bytes into the stream, or at least is directly responsible for doing something that causes NUL bytes to get inserted into the stream. The fact that it only happens with certain types of messages, though, in my specific case, is really perplexing. The only obvious difference in the message 'types' is that the problematic messages are a couple of bytes longer.

A non-obvious, and probably more important, difference is the timing of the messages. The problematic messages come in faster when they are being generated, and a lot more data is sent in a given time period.
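The two differences just described (slightly longer messages, arriving faster and in larger volume) are natural parameters for the planned simulator. A minimal sketch of its data-generation side, assuming the simulator writes to an `OutputStream` (for example, one end of a virtual COM port pair; a `ByteArrayOutputStream` is used here). The class name and the line format are hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

// Hypothetical device simulator: writes bursts of CRLF-terminated printable-ASCII lines.
// Line length, lines per burst and the pause between bursts are the knobs to vary.
final class DeviceSimulator {
    static void emitBurst(OutputStream out, int burstNo, int lines, int lineLength) throws IOException {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < lines; i++) {
            sb.setLength(0);
            sb.append(burstNo).append('/').append(i).append(':'); // identifies the line in a capture
            while (sb.length() < lineLength) {
                sb.append((char) ('a' + sb.length() % 26)); // position-dependent filler
            }
            sb.append("\r\n");
            out.write(sb.toString().getBytes(StandardCharsets.US_ASCII));
        }
        out.flush();
    }

    static void run(OutputStream out, int bursts, int linesPerBurst, int lineLength, long pauseMillis)
            throws IOException, InterruptedException {
        for (int b = 0; b < bursts; b++) {
            emitBurst(out, b, linesPerBurst, lineLength);
            Thread.sleep(pauseMillis); // quiet period between bursts
        }
    }

    public static void main(String[] args) throws Exception {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        run(out, 2, 3, 20, 10);
        System.out.print(out.toString(StandardCharsets.US_ASCII.name()));
    }
}
```

Raising `lineLength` by a couple of bytes, or shortening `pauseMillis`, would mimic the 'problematic' message types described above.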

So once again, it would possibly seem to be a buffer-overflow type of issue. However, the fact that it is periodic, and that it happens over a length of time where no problems occur, also seems perplexing. I'm getting close to having a simulator working. Once I have that, I'll be able to test a number of data flow scenarios more easily, and hopefully I'll be able to replicate the issue consistently.

On 8.4.2012 19.37, Alistair Bell wrote: All data sent from the device should be printable ASCII, excluding the CR/LF characters at the end of each line.

That seems to be the case when using rxtx. Also, I just used to log the raw data being sent by the device without rxtx/PJC/Java in the loop. After startup (in which I can see the device does send a sequence of NUL bytes), no NUL bytes were seen after monitoring it for a good fifteen minutes. I then switched back to PJC and NUL bytes were again reported per the above. I think that confirms that PJC is somehow inserting NUL bytes into the stream, or at least is directly responsible for doing something that causes NUL bytes to get inserted into the stream.

Good to get confirmation on that, though I never doubted it.

The fact that it only happens with certain types of messages though, in my specific case, is really perplexing. The only obvious difference in the message 'types', is that the problematic messages are a couple bytes longer.

A non obvious difference, and probably more important, is the timing of the messages. The problematic messages come in faster when they are being generated and there is a lot more data being sent for a given time period. So once again, it would possibly seem to be a buffer overflow type issue.

I agree that it must be something like that.

However, the fact that it is periodic and happens over a length of time where no problems occur also seems perplexing. I'm getting close to having a simulator working. Once I have that, I'll be able to test a number of data flow scenarios more easily and hopefully I'll be able to replicate the issue consistently.

Good, I look forward to getting some more data to chew on. One thing you could try would be to hack PureJavaComm.InputStream.read to fill the buffer with some known non-zero value, and the InputStream internal buffer with some other non-zero value, so that you could see whether it is PJC or the OS that fills the buffer with zeros.
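Part of the sentinel idea can be tried without modifying PJC, at the application level: fill the caller's buffer with a known non-zero byte before each `read` and then check what the call changed. This is a sketch under stated assumptions: 0xA5 is an arbitrary sentinel chosen because, per the device description in this thread, it never occurs in printable-ASCII data; the names are hypothetical; and it cannot see PJC's internal buffer, which would still need the hack described above.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.util.Arrays;

// Application-level version of the sentinel idea: pre-fill the caller's buffer with a known
// non-zero byte (0xA5, an arbitrary choice) before each read, then inspect what the read changed.
final class SentinelRead {
    static final byte SENTINEL = (byte) 0xA5;

    static final class Report {
        final int count;            // value returned by read()
        final int zerosInData;      // NULs inside the reported range: really written by the call
        final int sentinelsInData;  // untouched bytes inside the reported range: count too high?
        final boolean wroteBeyond;  // bytes changed past the reported range

        Report(int count, int zerosInData, int sentinelsInData, boolean wroteBeyond) {
            this.count = count;
            this.zerosInData = zerosInData;
            this.sentinelsInData = sentinelsInData;
            this.wroteBeyond = wroteBeyond;
        }
    }

    static Report checkedRead(InputStream in, byte[] buf) throws IOException {
        Arrays.fill(buf, SENTINEL);
        int n = in.read(buf);
        int end = Math.min(Math.max(n, 0), buf.length); // clamp a bogus count to the buffer
        int zeros = 0;
        int sentinels = 0;
        for (int i = 0; i < end; i++) {
            if (buf[i] == 0) zeros++;
            if (buf[i] == SENTINEL) sentinels++;
        }
        boolean beyond = false;
        for (int i = end; i < buf.length; i++) {
            if (buf[i] != SENTINEL) {
                beyond = true;
                break;
            }
        }
        return new Report(n, zeros, sentinels, beyond);
    }

    public static void main(String[] args) throws IOException {
        Report r = checkedRead(new ByteArrayInputStream(new byte[] {'O', 'K'}), new byte[8]);
        System.out.println(r.count + " " + r.zerosInData + " " + r.sentinelsInData + " " + r.wroteBeyond);
        // 2 0 0 false
    }
}
```

Zeros inside the reported range mean something below the application really delivered them; sentinel bytes there instead mean the call reported more bytes than it wrote, which would surface as 'invented' zeros whenever the buffer happened to start out zeroed.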

Have you tried a pure read(byte) approach for testing, meaning having as little machinery as possible between your code and the PJC code? One thing that comes to mind is that if PJC somehow returned the wrong number of bytes (too many), this might cause something, either in your code or in BufferedReader, to 'invent' zeros in the stream.

I'm closing this issue for now because the comments above got too lengthy to read through, I'm still not 100% sure this is a PJC issue, and I don't have the time to dedicate to debugging further at this time.

To restate the underlying issue more simply: it seems that PJC is inserting 0x00 (NUL) bytes into the input stream in certain use cases while running on Linux.


I really would prefer to use PJC over rxtx as it just seems like a fundamentally better approach. When I get some time, I will try generating a simple standalone test case to replicate the issue. If successful, I'll open a new ticket.

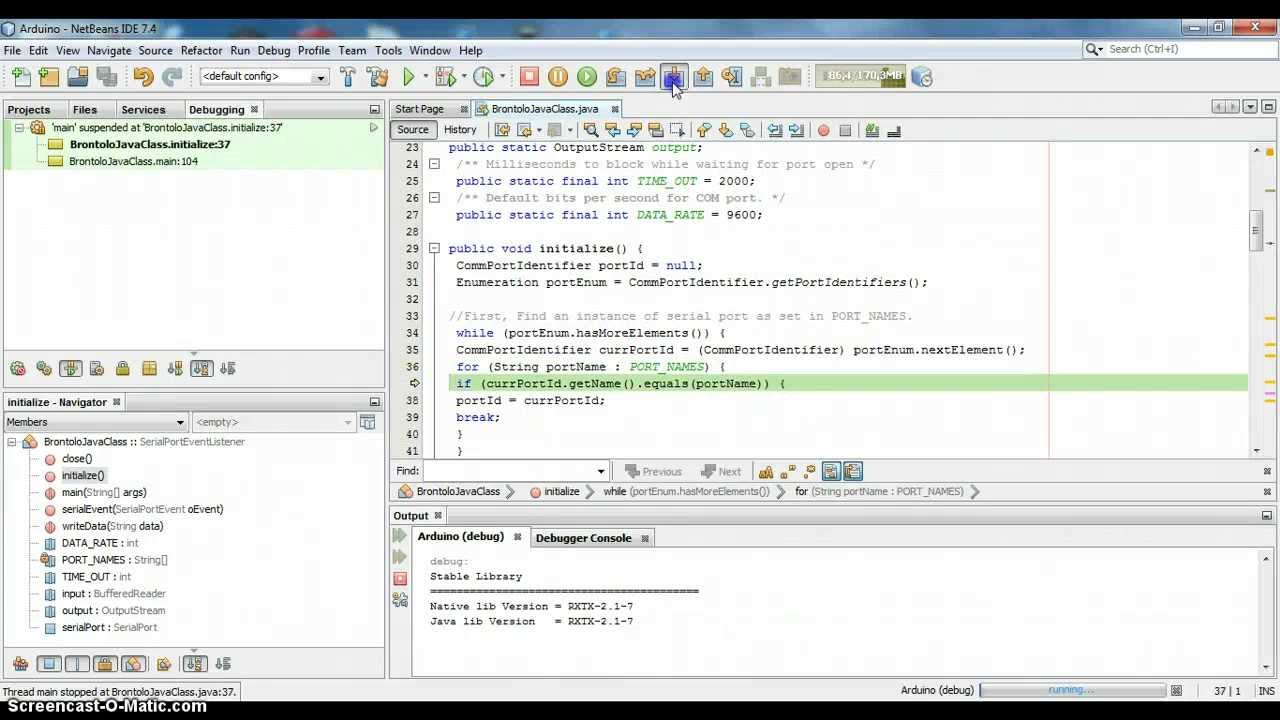

I have a Serial-to-USB device with a similarly named device driver in the Windows Device Manager. The devices do not always grab the same COM port on system boot, so my program needs to identify it on startup. I've tried using to enumerate the COM ports on the system, but this didn't work because CommPortIdentifier.getName simply returns the COM name (e.g. COM1, COM2, etc.). I need to acquire either the driver manufacturer name or the driver name as it appears in the Device Manager, and associate it with the COM name. Can this easily be done in Java? (I'd be interested in any third-party Java libraries that support this.) Otherwise, how could I begin to accomplish this via the Win32 API?
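One possible starting point on Windows, offered as a sketch rather than a complete answer: the registry key `HKLM\HARDWARE\DEVICEMAP\SERIALCOMM` maps serial device names to COM names, and the device name often hints at the driver (FTDI's driver, for example, typically registers names like `\Device\VCP0`). That is not the Device Manager display name; the friendly name is normally read through the SetupAPI (`SetupDiGetClassDevs` plus `SetupDiGetDeviceRegistryProperty` with `SPDRP_FRIENDLYNAME`), which from Java needs a native bridge such as JNA or JNI. The sketch below only shells out to `reg query` and parses its output; the class name is hypothetical and the parser assumes the usual `name    REG_SZ    value` layout of `reg.exe` output.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.Charset;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: maps COM names to kernel device names via the SERIALCOMM registry key.
final class SerialCommMap {
    /** Parses "reg query" output lines such as "    \Device\VCP0    REG_SZ    COM3". */
    static Map<String, String> parse(String regOutput) {
        Map<String, String> comToDevice = new LinkedHashMap<>();
        for (String line : regOutput.split("\\R")) {
            String[] parts = line.trim().split("\\s+REG_SZ\\s+");
            if (parts.length == 2) { // header and blank lines have no REG_SZ column
                comToDevice.put(parts[1].trim(), parts[0].trim());
            }
        }
        return comToDevice;
    }

    /** Windows only: runs reg.exe and parses its output. */
    static Map<String, String> query() throws IOException, InterruptedException {
        Process p = new ProcessBuilder("reg", "query", "HKLM\\HARDWARE\\DEVICEMAP\\SERIALCOMM")
                .redirectErrorStream(true)
                .start();
        String output;
        try (InputStream in = p.getInputStream()) {
            output = new String(in.readAllBytes(), Charset.defaultCharset());
        }
        p.waitFor();
        return parse(output);
    }

    public static void main(String[] args) throws Exception {
        if (System.getProperty("os.name").startsWith("Windows")) {
            query().forEach((com, device) -> System.out.println(com + " -> " + device));
        }
    }
}
```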